
In the world of AI, what might be called “small language models” have been growing in popularity recently because they can be run on a local device instead of requiring data center-grade computers in the cloud. On Wednesday, Apple introduced a set of tiny source-available AI language models called OpenELM that are small enough to run directly on a smartphone. They’re mostly proof-of-concept research models for now, but they could form the basis of future on-device AI offerings from Apple.

Apple’s new AI models, collectively named OpenELM for “Open-source Efficient Language Models,” are currently available on Hugging Face under an Apple Sample Code License. Since there are some restrictions in the license, it may not fit the commonly accepted definition of “open source,” but the source code for OpenELM is available.

On Tuesday, we covered Microsoft’s Phi-3 models, which aim to achieve something similar: a useful level of language understanding and processing performance in small AI models that can run locally. Phi-3-mini features 3.8 billion parameters, but some of Apple’s OpenELM models are much smaller, ranging from 270 million to 3 billion parameters across eight distinct models.

In comparison, the largest model yet released in Meta’s Llama 3 family includes 70 billion parameters (with a 400 billion version on the way), and OpenAI’s GPT-3 from 2020 shipped with 175 billion parameters. Parameter count serves as a rough measure of AI model capability and complexity, but recent research has focused on making smaller AI language models as capable as larger ones were a few years ago.
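To put those parameter counts in perspective, here is a minimal back-of-the-envelope sketch of how much memory the weights alone would occupy. It assumes 16-bit weights (2 bytes per parameter), which is a common choice but not something stated for any of these models, and it ignores activations, caches, and runtime overhead. The function name `weight_memory_gb` and the dictionary labels are hypothetical.

```python
# Parameter counts as quoted in the article.
PARAM_COUNTS = {
    "OpenELM (smallest)": 270e6,
    "OpenELM (largest)": 3e9,
    "Phi-3-mini": 3.8e9,
    "Llama 3 (largest released)": 70e9,
    "GPT-3": 175e9,
}


def weight_memory_gb(num_params: float, bytes_per_param: int = 2) -> float:
    """Approximate size of the weights alone, in gigabytes (1 GB = 1e9 bytes).

    bytes_per_param=2 is an assumption (16-bit weights); quantized models
    would use less, 32-bit weights would use twice as much.
    """
    return num_params * bytes_per_param / 1e9


if __name__ == "__main__":
    for name, count in PARAM_COUNTS.items():
        print(f"{name:28s} {weight_memory_gb(count):8.2f} GB")
```

Under that assumption, the smallest OpenELM model needs roughly half a gigabyte for its weights, while GPT-3 would need hundreds of gigabytes, which is why only the former is a plausible fit for a phone.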

The eight OpenELM models come in two flavors: four as “pretrained” (basically a raw, next-token version of the model) and four as instruction-tuned (fine-tuned for instruction following, which is better suited to developing AI assistants and chatbots).

OpenELM features a 2048-token maximum context window. The models were trained on the publicly available datasets RefinedWeb, a version of PILE with duplications removed, a subset of RedPajama, and a subset of Dolma v1.6, which Apple says totals around 1.8 trillion tokens of data. Tokens are fragmented representations of data used by AI language models for processing.
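As a rough illustration of what a fixed context window means in practice, the sketch below splits text into tokens and keeps only as many as fit. The whitespace tokenizer is a hypothetical stand-in: real language models use subword tokenizers, so actual token counts will differ. Dropping the oldest tokens first is also just one possible policy, assumed here for simplicity.

```python
MAX_CONTEXT_TOKENS = 2048  # OpenELM's maximum context window, per the article


def toy_tokenize(text: str) -> list[str]:
    """Hypothetical stand-in tokenizer: splits on whitespace only."""
    return text.split()


def fit_to_context(tokens: list[str], limit: int = MAX_CONTEXT_TOKENS) -> list[str]:
    """Keep at most `limit` tokens, dropping the oldest ones first."""
    if len(tokens) <= limit:
        return tokens
    return tokens[-limit:]


if __name__ == "__main__":
    long_input = "word " * 3000  # 3,000 toy tokens, more than the window holds
    tokens = toy_tokenize(long_input)
    kept = fit_to_context(tokens)
    print(f"{len(tokens)} tokens in, {len(kept)} tokens kept")
```

Anything beyond the window is simply invisible to the model, so a 2048-token limit constrains how much prompt and conversation history can be considered at once.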

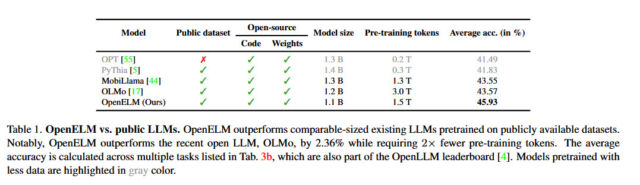

Apple says its approach with OpenELM includes a “layer-wise scaling strategy” that reportedly allocates parameters more efficiently across each layer, not only saving computational resources but also improving the model’s performance while being trained on fewer tokens. According to Apple’s released white paper, this strategy has enabled OpenELM to achieve a 2.36 percent improvement in accuracy over Allen AI’s OLMo 1B (another small language model) while requiring half as many pre-training tokens.
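The article does not spell out how the layer-wise scaling works, so the following is only a sketch of the general idea: instead of giving every layer the same width, a fixed budget is spread unevenly across layers. It assumes a simple linear interpolation from a narrow first layer to a wide last layer. The function names and the example widths are hypothetical and are not taken from Apple's code.

```python
def uniform_widths(num_layers: int, width: int) -> list[int]:
    """Conventional layout: every layer gets the same width."""
    return [width] * num_layers


def layerwise_widths(num_layers: int, min_width: int, max_width: int) -> list[int]:
    """Assumed scheme: interpolate linearly from min_width (first layer)
    to max_width (last layer)."""
    if num_layers < 1:
        raise ValueError("num_layers must be at least 1")
    if num_layers == 1:
        return [min_width]
    step = (max_width - min_width) / (num_layers - 1)
    return [round(min_width + step * i) for i in range(num_layers)]


if __name__ == "__main__":
    uniform = uniform_widths(5, 1536)
    scaled = layerwise_widths(5, 512, 2560)
    # Both layouts spend the same total width, distributed differently.
    print("uniform:", uniform, "total:", sum(uniform))
    print("scaled: ", scaled, "total:", sum(scaled))
```

In this toy example both layouts add up to the same total, which captures the claim in spirit: the gain is meant to come from where the parameters are placed, not from adding more of them.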


Apple also released the code for CoreNet, a library it used to train OpenELM, and it included reproducible training recipes that allow the weights (neural network files) to be replicated, which has so far been unusual for a major tech company. As Apple says in its OpenELM paper abstract, transparency is a key goal for the company: “The reproducibility and transparency of large language models are crucial for advancing open research, ensuring the trustworthiness of results, and enabling investigations into data and model biases, as well as potential risks.”

By releasing the source code, model weights, and training materials, Apple says it aims to “empower and enrich the open research community.” However, it also cautions that since the models were trained on publicly sourced datasets, “there exists the possibility of these models producing outputs that are inaccurate, harmful, biased, or objectionable in response to user prompts.”

While Apple has not yet integrated this new wave of AI language model capabilities into its consumer devices, the upcoming iOS 18 update (expected to be revealed in June at WWDC) is rumored to include new AI features that use on-device processing to ensure user privacy, though the company may potentially hire Google or OpenAI to handle more complex, off-device AI processing to give Siri a long-overdue boost.